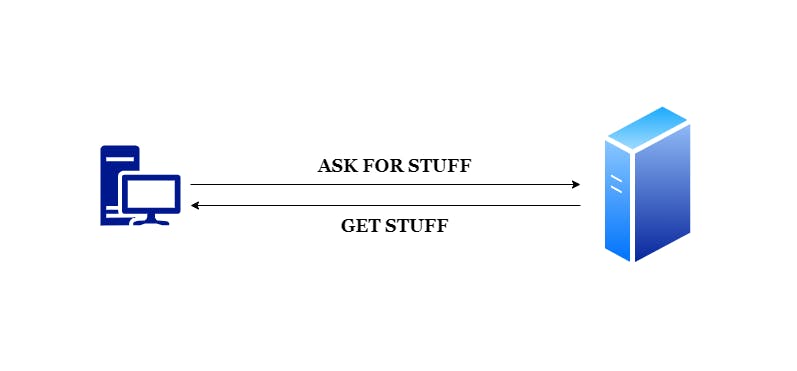

Traditionally, when people first started building for the web, they followed a particular pattern: a simple client-server architecture. The browser, acting as the client, requested resources, and the server delivered them in response. This was quite straightforward. People would create assets and put them on a server. When someone came along with a client and asked for those resources, the server simply delivered them. This model was one of the early adopters of the KISS principle. Unfortunately, it didn't last long, and we soon ran into limitations. The resources uploaded to the server were static, which made the overall user experience static as well.

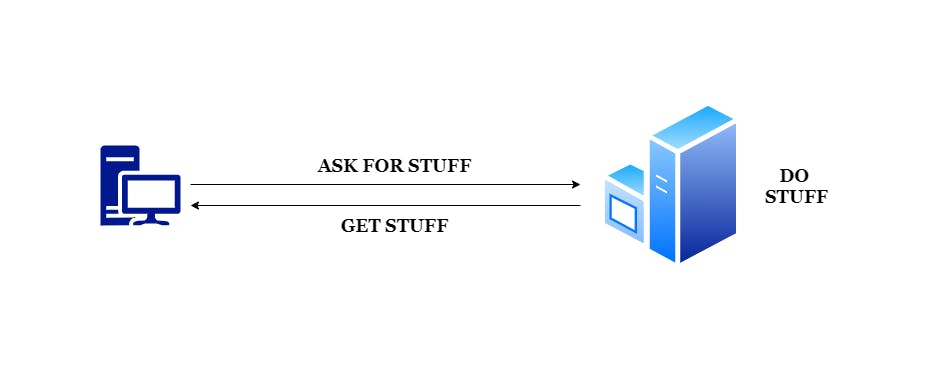

This led to the need for some dynamism at the server level. Instead of just responding to requests from clients, servers could now 'do stuff' as well. This new logic layer let servers execute scripts for every request they received and generate a view, which was then returned to the client as the response. Requests could now be served on the fly.
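To make the per-request model concrete, here is a minimal TypeScript sketch of a server-side handler that assembles a view every time it is called. The `IncomingRequest` shape, the in-memory `posts` object standing in for a database, and the `renderCount` counter are hypothetical and exist only for illustration. Real server-side scripting (PHP, Node.js, etc.) would sit behind an actual HTTP server.

```typescript
// Hypothetical request shape; real servers expose much richer request objects.
interface IncomingRequest {
  path: string;
  user?: string;
}

// Stand-in for data the script would normally query from a database server.
const posts: Record<string, { title: string; body: string }> = {
  "/hello": { title: "Hello", body: "First post." },
};

// Counts how many times a view has been generated.
let renderCount = 0;

// Runs on EVERY request: look up the data, then assemble the view on the fly.
function handleRequest(req: IncomingRequest): { status: number; html: string } {
  renderCount++;
  const post = posts[req.path];
  if (!post) {
    return { status: 404, html: "<h1>Not found</h1>" };
  }
  // Per-request personalisation is what made this model attractive.
  const greeting = req.user ? `<p>Welcome back, ${req.user}</p>` : "";
  return {
    status: 200,
    html: `<h1>${post.title}</h1>${greeting}<p>${post.body}</p>`,
  };
}
```

Two requests for the same path run the whole handler twice. That repeated work is exactly what later approaches try to avoid.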

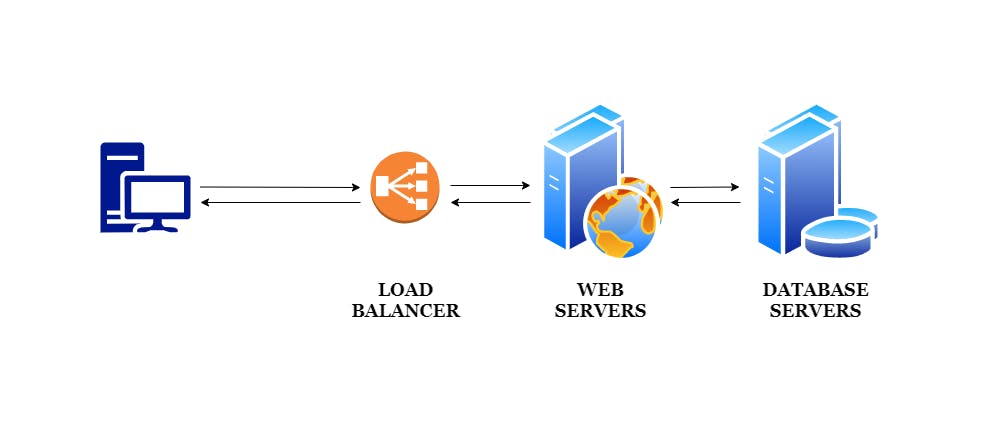

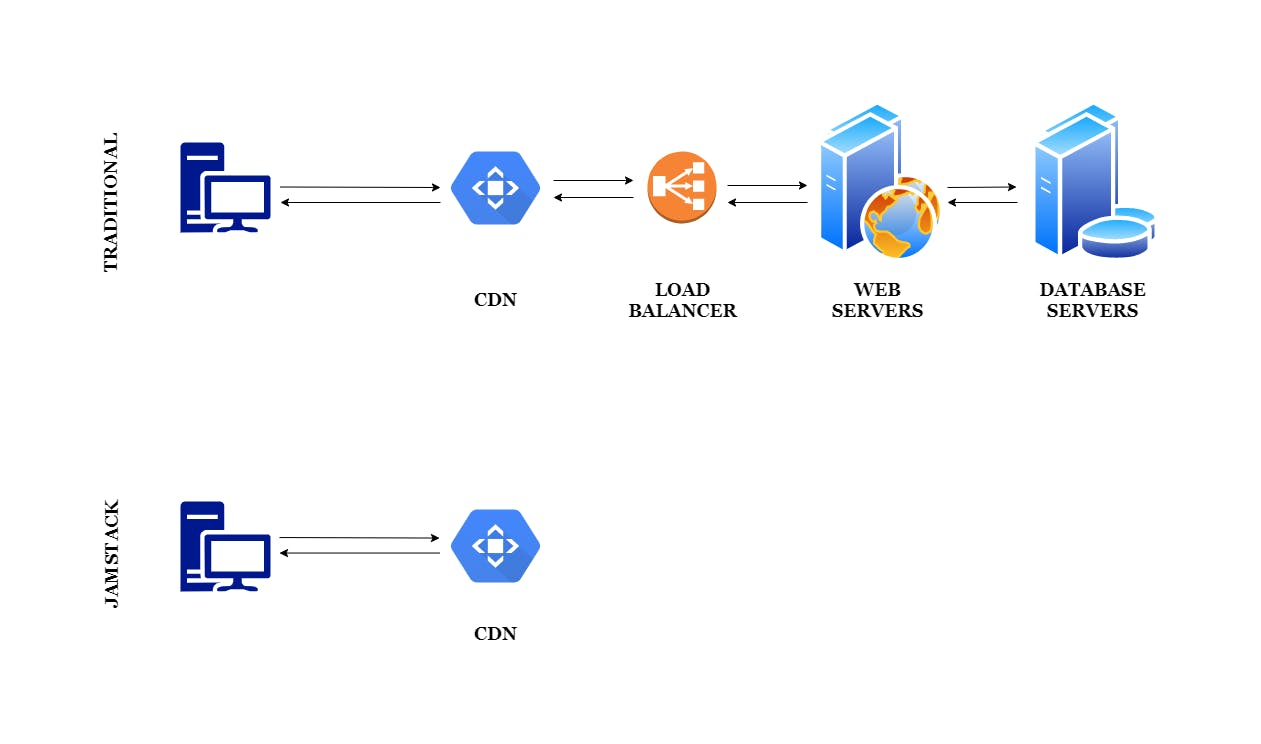

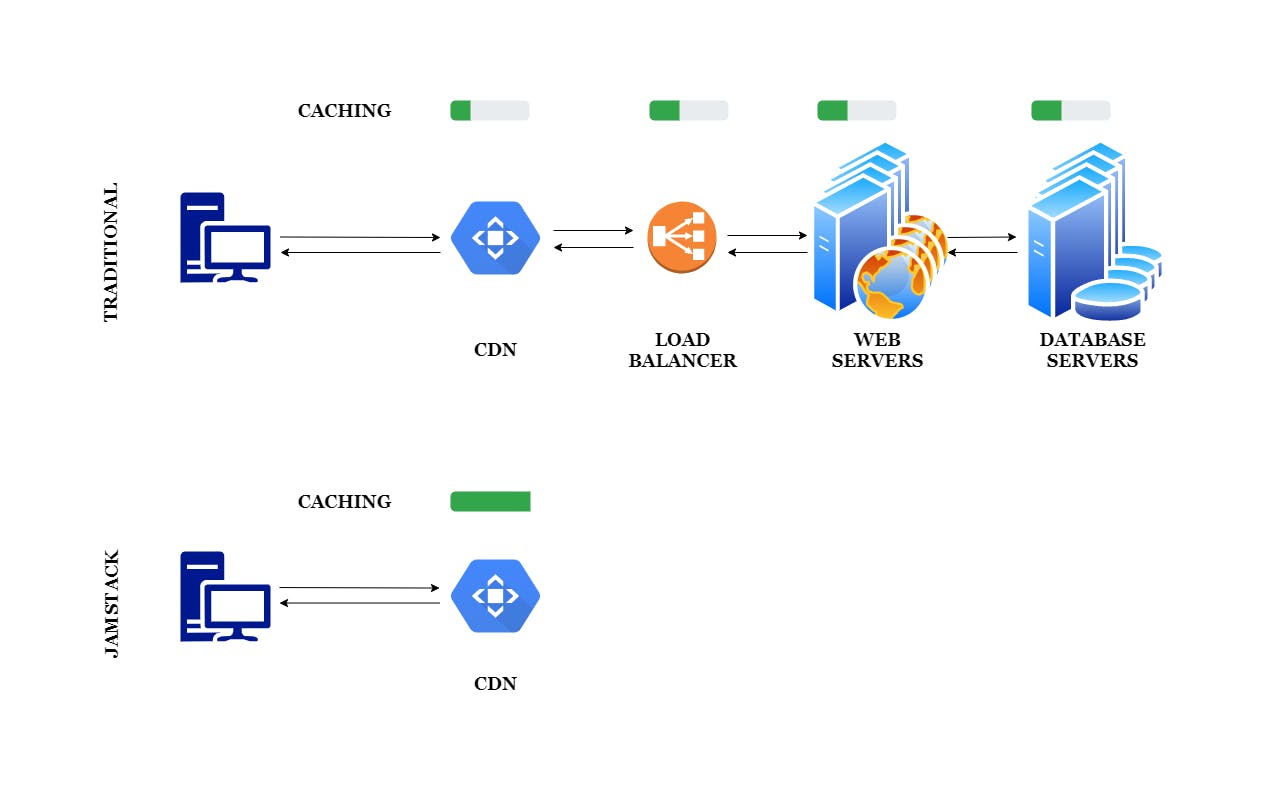

One unintended side effect of this evolution was that servers now needed enough capacity and horsepower to meet the ever-increasing demands of clients. The server was therefore split into different types of components, such as load balancers and database servers, each with its own responsibility. The load balancer was trusted with routing incoming traffic to multiple highly available, highly redundant servers so that each request was served as quickly as possible. These servers, in turn, queried the database servers, which held the data used to construct the views.
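The post doesn't say how the load balancer chooses a server. One common strategy is round-robin, sketched below in TypeScript as an assumption. `RoundRobinBalancer`, `Backend` and the `healthy` flag are hypothetical names. A real load balancer would also probe server health and forward the actual traffic.

```typescript
// Hypothetical application server sitting behind the load balancer.
interface Backend {
  host: string;
  healthy: boolean;
}

// Round-robin: hand each incoming request to the next healthy server in turn.
class RoundRobinBalancer {
  private readonly backends: Backend[];
  private next = 0; // index of the server to try first on the next request

  constructor(backends: Backend[]) {
    this.backends = backends;
  }

  pick(): Backend {
    // Try each server at most once, starting from where we left off.
    for (let i = 0; i < this.backends.length; i++) {
      const index = (this.next + i) % this.backends.length;
      const candidate = this.backends[index];
      if (candidate.healthy) {
        this.next = (index + 1) % this.backends.length;
        return candidate;
      }
    }
    throw new Error("no healthy backend available");
  }
}
```

Suppose `app-1` is healthy, `app-2` is unhealthy and `app-3` is healthy. Three consecutive `pick()` calls then return `app-1`, `app-3`, `app-1`, skipping the unhealthy server.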

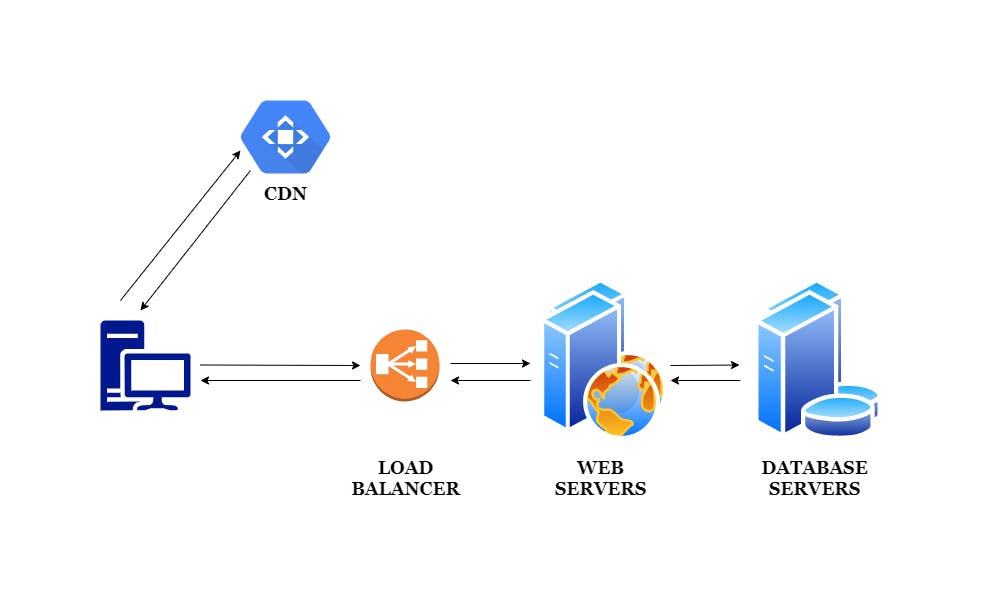

We then discovered that some assets are mostly static and do not need to be generated again for every request. This led to the rise of Content Delivery Networks, a.k.a. CDNs. CDNs are strategically geo-located so that those assets can be served directly from them, without querying our traditional infrastructure on every request.

Over time, all this infrastructure, though very sophisticated, became complicated. At the same time, our browsers became far more capable, which means we can use them to great effect. Also, after building so many sites for so long, we as an industry have matured our processes and got a lot better at the way we build and deploy sites. These matured processes paved the way for better tooling, which lets us generate, manage and deploy our code in new and improved ways.

This gave rise to the concept of 'stacks': collections of technologies that we use together. In simple words, a stack is a set of layers of technologies that help us deliver our applications. Each layer is a set of tools that helps ensure our site is delivered as we intend.

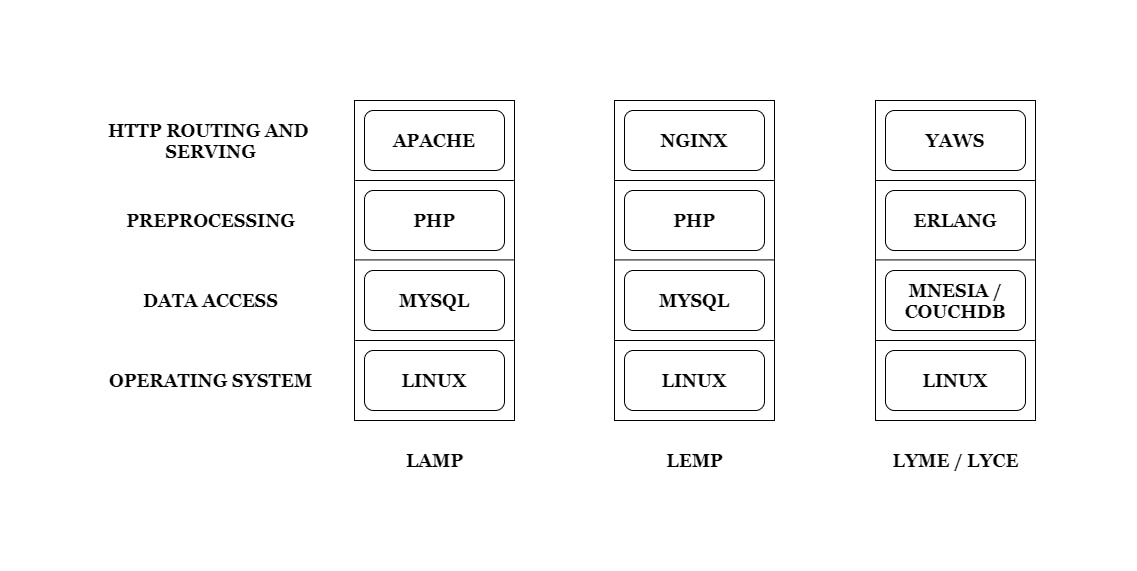

Over time, we've developed quite a number of stacks, such as LAMP, LEMP, MEAN, PERN and LYME / LYCE. Each of these stacks uses a different set of tools and technologies.

All these stacks share a few common components. Usually, there is an operating system to build our application on, such as Linux or Windows. There is a layer for data access and its associated services, such as MySQL, MongoDB or CouchDB. There is a pre-processing layer containing the actual scripts that handle the logic and assemble the views, such as PHP, Node.js or Erlang. Finally, there is a layer for HTTP routing and serving, such as Apache or Nginx.

Jamstack

I believe Jamstack is best described by its website:

Fast and secure sites and apps delivered by pre-rendering files and serving them directly from a CDN, removing the requirement to manage or run web servers.

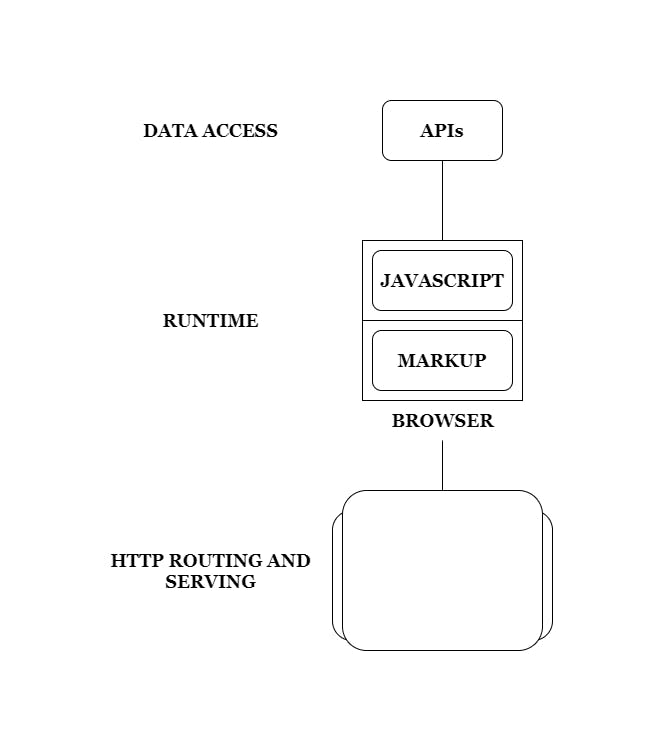

To put it simply, Jamstack is an approach to delivering applications. The name Jamstack comes from JavaScript, APIs and Markup.

Unlike the stacks we saw above, Jamstack looks quite different. Data access is handled by APIs, so the database is abstracted away and we do not need to deal with it directly. We call these APIs using JavaScript, which interacts with the Markup. Together, JavaScript and Markup live in the browser and make up our runtime, taking over the pre-processing responsibility of a traditional stack. HTTP routing and serving are handled by static servers and CDNs.
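Here is a rough TypeScript illustration of the runtime half of this picture: it calls an API and turns the JSON it returns into markup. The comments endpoint, the `CommentItem` shape and the function names are hypothetical. The fetch function is passed in as a parameter so the sketch doesn't depend on a particular browser or server environment.

```typescript
// Shape of the data a hypothetical comments API returns.
interface CommentItem {
  author: string;
  text: string;
}

// The small subset of the browser's fetch Response that this sketch relies on.
interface JsonResponse {
  ok: boolean;
  json(): Promise<unknown>;
}
type Fetcher = (url: string) => Promise<JsonResponse>;

// API data is not trusted markup, so escape it before inserting it into HTML.
function escapeHtml(s: string): string {
  return s
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Pure rendering step: turn API data into a markup fragment.
function renderComments(comments: CommentItem[]): string {
  if (comments.length === 0) {
    return "<p>No comments yet.</p>";
  }
  const items = comments
    .map((c) => `<li><strong>${escapeHtml(c.author)}</strong>: ${escapeHtml(c.text)}</li>`)
    .join("");
  return `<ul>${items}</ul>`;
}

// Runtime step: call the API, then produce the fragment for the page.
// In a browser you could pass window.fetch here.
async function loadComments(fetcher: Fetcher, apiUrl: string): Promise<string> {
  const res = await fetcher(apiUrl);
  if (!res.ok) {
    return "<p>Comments are unavailable right now.</p>";
  }
  const data = (await res.json()) as CommentItem[];
  return renderComments(data);
}
```

In a browser, the returned string could be assigned to the `innerHTML` of an element on the pre-rendered page. The page itself stays static while the API supplies the dynamic part.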

To sum up, Jamstack is about content that is pre-rendered. It is about leveraging the browser's capabilities. It is about delivering applications and sites without having to deal with a web server and its various configurations.

If we look at this architecture in terms of client and server, it might seem as if we are going back to where we started: the simple client-server architecture. But there's more to it than meets the eye. The return to this simplicity actually started long ago, when the legendary Aaron Swartz wrote a blog post titled Bake, Don't Fry as early as 2002. In it, he describes a simple architecture where we do not build a response for every request we receive. Instead, the response is pre-rendered ahead of time so that it is ready to be returned as-is whenever a request comes in. Hence, we bake the response ahead of time rather than frying it on demand for every request.
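The difference between baking and frying fits in a few lines of TypeScript. In this minimal model, `build()` plays the role of a static site generator: it renders every page once, up front. The resulting map of paths to HTML stands in for the files that would be uploaded to a static host or CDN, and `serve()` only looks up a finished page. The `Page` type, the sample content and all function names are hypothetical.

```typescript
// Hypothetical content source; a real generator might read Markdown files or an API.
interface Page {
  slug: string;
  title: string;
  body: string;
}

const pages: Page[] = [
  { slug: "/", title: "Home", body: "Welcome." },
  { slug: "/about", title: "About", body: "Who we are." },
];

// The template applied to each page.
function renderPage(p: Page): string {
  return `<!doctype html><title>${p.title}</title><h1>${p.title}</h1><p>${p.body}</p>`;
}

// BAKE: runs once at build time. The output stands in for the deployed files.
function build(content: Page[]): Map<string, string> {
  const output = new Map<string, string>();
  for (const p of content) {
    output.set(p.slug, renderPage(p));
  }
  return output;
}

// SERVE: at request time nothing is generated; the baked page is returned as-is.
function serve(site: Map<string, string>, path: string): { code: number; html: string } {
  const html = site.get(path);
  return html === undefined ? { code: 404, html: "Not found" } : { code: 200, html };
}
```

Here the template runs once per page at build time, no matter how many requests arrive later. A per-request handler, by contrast, runs its template on every request.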

Motives

The primary argument for pre-rendering is that it lightens the load on servers by doing the work beforehand, producing pre-generated assets that are ready to deploy. This eliminates the complexity of the many moving parts involved in the client-server architecture we have evolved. In a traditional architecture, a deployment must make sure every component is updated accordingly: the load balancers, the database servers, the web servers, the CDNs, etc. Each of these components requires its own action, so the more components there are, the more overhead the deployment process carries. With Jamstack, this is greatly simplified. Since all the assets are pre-generated, we can deploy the whole application or website directly to CDNs.

Security

One of the main advantages of Jamstack is its security: it greatly reduces the attack surface. The more components we have to deal with, the more care we must take to secure each of them in our infrastructure. With Jamstack, there is no web server or database of our own to run in the request path, so there are far fewer components to secure.

Performance

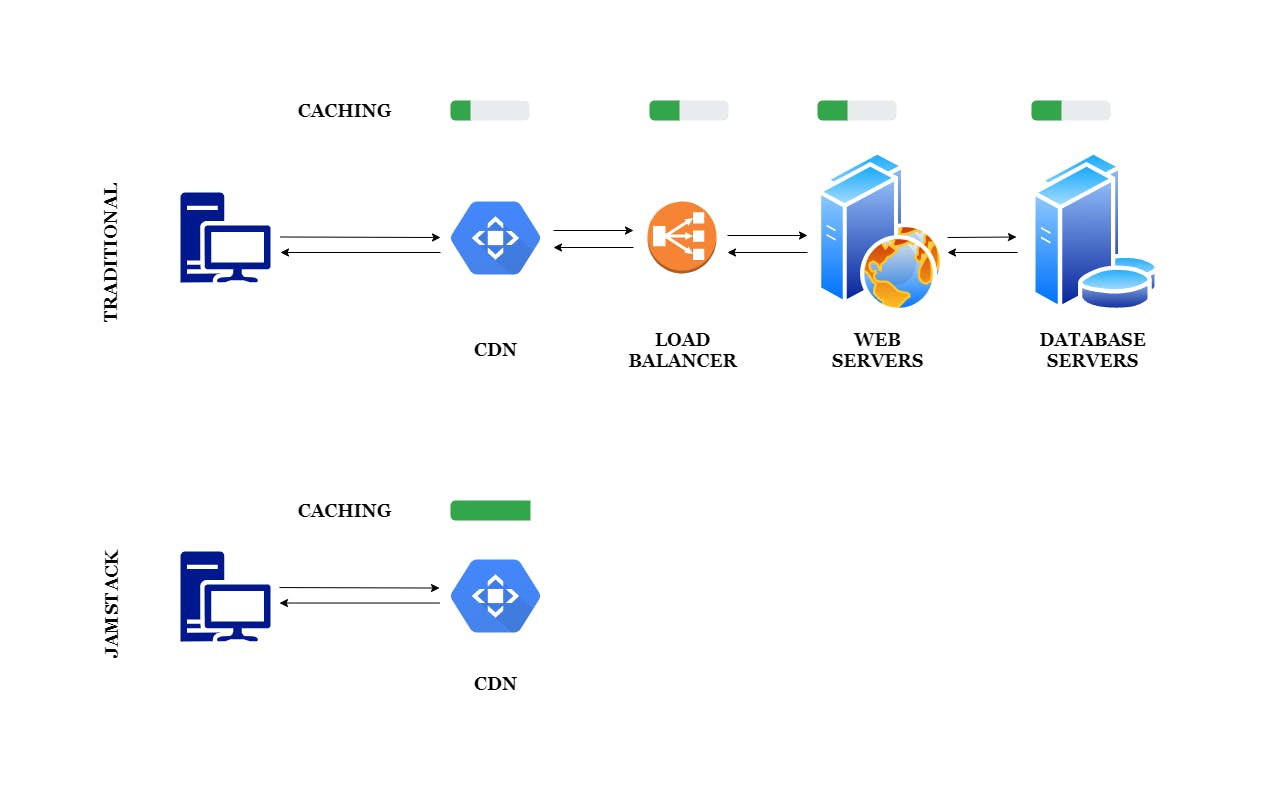

Different stacks handle performance in different ways. Traditional stacks commonly add static layers to improve performance. These static layers are caching mechanisms introduced at various components of the stack. Each level tries to reduce dynamic responses by predicting the most commonly requested assets or resources and caching them. This separation adds overhead and complexity, both in managing deployments and in deciding what needs to be cached and what should remain dynamic.

Jamstack works differently. Since our whole deployment consists of pre-generated assets, every update we push to the CDN effectively updates the entire cache. We therefore don't need to decide what should be cached and what should stay dynamic.
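Here is a deliberately simplified TypeScript model of that idea. One CDN edge location holds a complete snapshot of the pre-generated site, and each deploy swaps in the new snapshot as a whole. `EdgeCache` and `Snapshot` are hypothetical names. Real CDNs propagate a deploy across many edge locations, which this sketch ignores.

```typescript
// A complete, immutable build: path -> pre-generated file contents.
type Snapshot = ReadonlyMap<string, string>;

// Hypothetical model of a single CDN edge location.
class EdgeCache {
  private current: Snapshot = new Map();

  // Deploy: the whole pre-generated site replaces the previous one in one step,
  // so there is no per-file decision about what to cache or for how long.
  deploy(snapshot: Snapshot): void {
    this.current = snapshot;
  }

  // A request either finds a pre-generated file or gets nothing;
  // there is no dynamic layer behind the cache in this model.
  get(path: string): string | undefined {
    return this.current.get(path);
  }
}
```

After a second deploy, `/` returns the new page and any path missing from the new build simply disappears, with no per-file cache rules.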

Scaling

In traditional stacks, scaling more often than not means adding infrastructure, either horizontally or vertically. Horizontal scaling means adding more machines to our pool of existing resources. Vertical scaling means adding more power, such as CPU or RAM, to an existing machine. Either way, more resources and more servers mean more cost. Our deployments also get more complex, which increases the overhead of replicating everything across the scaled environment.

With Jamstack, it's a different scenario. Our assets are already pre-generated and cached by design and by default. No page has to be generated on demand, so no on-demand work needs to be done. And since everything is delivered from CDNs, our responses are quick and efficient. CDNs have been designed to handle high load since their inception, which hugely benefits Jamstack.

Conclusion

If we pay close attention, the architecture we're trying to achieve here may look like a return to where we started: the simple client-server architecture.

This can raise a few eyebrows, and fairly so. The primary difference is that we're returning to that initial simple architecture after having evolved. We're not just going back to how things were before. We now have a host of tools to our advantage that we've learned and built over time. Things aren't static in the same way anymore. There are various enablers: static site generators that pre-generate our assets at build time rather than request time, tooling and automation for our deployment processes, browser capabilities, and the services and API ecosystem as a whole.

That said, while Jamstack is great for many scenarios, such as informational websites, case-study showcases and blogs, it is by no means the holy grail. There are still many scenarios, such as sites serving dynamic content like e-commerce applications, where building with a traditional stack is still the better option. Jamstack is best suited to flexible static sites, and to those who don't want the overhead of maintaining complex applications such as a CMS. Quite a few people are already considering migrating from traditional CMSs like WordPress to Jamstack.

Finally, as with any other technology, one should carefully consider which technology stack would best serve one's application and adapt accordingly. This is why this post is titled 'A Modern Alternative To Traditional Web Stacks' rather than 'A Modern Replacement To Traditional Web Stacks'.